Have you considered AWS Glue? You can create a Glue Catalog based on your Redshift sources and then convert the data into Parquet. Here is an AWS blog post for your reference; although it talks about converting CSV to Parquet, you get the idea.

We'll try reloading the data with COPY and then re-exporting it with UNLOAD, using the cast to double precision that you mentioned. We'll verify it as a workaround and I'll post back the results.

The UNLOAD command is also recommended when you need to retrieve large result sets.

`maxfilesize` (float, optional): Specifies the maximum …

Here are a few of the runtime options:

- `-t`: The table you wish to UNLOAD
- `-f`: The S3 key at which the file will be placed
- `-s` (optional): The file from which to read a custom, valid SQL WHERE clause

This will also export a header row, something that is a little more complicated to undertake.
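The runtime options above can be pictured as a small command-line helper. This is a hypothetical Python sketch, not the script those options come from: only the `-t`, `-f`, and `-s` letters are from the post, while the `--iam-role` flag, its placeholder ARN, and the choice of `FORMAT AS CSV` plus Redshift's `HEADER` option are assumptions. It only builds and prints the UNLOAD statement; you would still run it through your SQL client.

```python
"""Hypothetical helper that turns -t / -f / -s options into an UNLOAD statement."""
import argparse
from typing import List, Optional

# Placeholder role ARN (assumption): replace with a role your cluster can assume.
DEFAULT_ROLE = "arn:aws:iam::123456789012:role/MyRedshiftRole"


def escape_for_unload(sql: str) -> str:
    # UNLOAD receives the query as a quoted string, so embedded single quotes are doubled.
    return sql.replace("'", "''")


def build_unload(table: str, s3_key: str, where: Optional[str], iam_role: str) -> str:
    query = f"SELECT * FROM {table}"
    if where:
        query += f" WHERE {where}"
    return (
        f"UNLOAD ('{escape_for_unload(query)}')\n"
        f"TO '{s3_key}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        "FORMAT AS CSV\n"
        "HEADER"  # Redshift writes a column-name header line at the top of each file
    )


def main(argv: Optional[List[str]] = None) -> str:
    parser = argparse.ArgumentParser(description="Build a Redshift UNLOAD statement")
    parser.add_argument("-t", required=True, help="table to UNLOAD")
    parser.add_argument("-f", required=True, help="S3 key (prefix) for the output files")
    parser.add_argument("-s", help="file containing a custom SQL WHERE clause")
    parser.add_argument("--iam-role", default=DEFAULT_ROLE)
    args = parser.parse_args(argv)

    where = None
    if args.s:
        # Read the WHERE clause text from the file given with -s.
        with open(args.s) as fh:
            where = fh.read().strip()

    sql = build_unload(args.t, args.f, where, args.iam_role)
    print(sql)
    return sql


if __name__ == "__main__":
    main()
```

For example, `python unload_helper.py -t sales -f s3://my-bucket/sales_ -s where.sql` (file names hypothetical) prints a statement that unloads only the rows matching the clause in `where.sql`.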

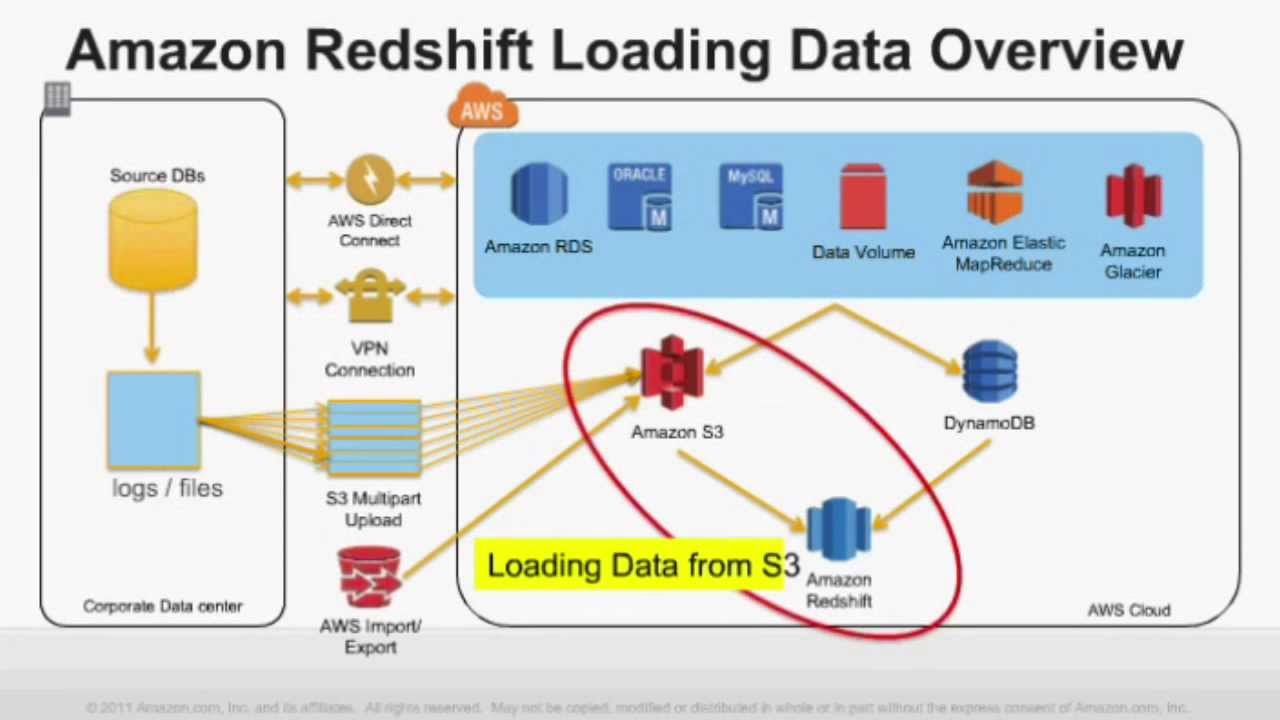

`unloadformat` (str, optional): Format of the unloaded S3 objects from the query.

With the UNLOAD command, you can export a query result set in text, JSON, or Apache Parquet file format to Amazon S3. Amazon Redshift splits the results of a SELECT statement across a set of files, one or more files per node slice, to simplify parallel reloading of the data. Alternatively, you can specify that UNLOAD should write the results serially to one or more files by adding the PARALLEL OFF option. By default, UNLOAD assumes that the target Amazon S3 bucket is located in the same AWS Region as the Amazon Redshift cluster. Parquet format is up to 2x faster to unload and consumes up to 6x less storage in Amazon S3, compared with text formats. Amazon Redshift features such as COPY, UNLOAD, and Amazon Redshift Spectrum enable you to move and query data between your data warehouse and data lake.

As for the bug fix, this thread suggests it may land in the next release cycle's current-track release. Because we're on the trailing track, we'll have to wait two release cycles, i.e. ~6 weeks, as long as the patch with the fix doesn't span an OS patch as well. However, we're trying to get this fixed sooner than that. You can see this coming ahead of time in the Redshift events, which tell you when your clusters will get patched: 2026 events that contain the words "system maintenance" mean an OS patch, and 2025 events that contain the words "database maintenance" mean a Redshift software version upgrade. The last release cycle that we just got in us-east-1 this week (on the trailing track) included an OS patch too.
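The keyword rule for maintenance events can be expressed as a small classifier. This is a minimal sketch, assuming event messages are plain strings like the `Message` field returned by boto3's Redshift `describe_events` call; the helper names and the cluster name `my-cluster` are hypothetical, and matching on the phrases alone (ignoring the event IDs) is a simplification.

```python
"""Sketch: sort Redshift event messages into OS patches vs. version upgrades."""
from typing import Iterable, List, Tuple


def classify_event(message: str) -> str:
    text = message.lower()
    if "system maintenance" in text:
        return "os-patch"
    if "database maintenance" in text:
        return "redshift-version-upgrade"
    return "other"


def maintenance_events(messages: Iterable[str]) -> List[Tuple[str, str]]:
    # Keep only messages that announce one of the two maintenance kinds.
    found = []
    for msg in messages:
        kind = classify_event(msg)
        if kind != "other":
            found.append((kind, msg))
    return found


def fetch_messages(cluster_id: str) -> List[str]:
    # Requires boto3 and AWS credentials; imported here so the
    # classifier above can be used and tested without them.
    import boto3

    client = boto3.client("redshift")
    # Last 7 days of cluster events; only the first page is read (no Marker handling).
    resp = client.describe_events(
        SourceIdentifier=cluster_id, SourceType="cluster", Duration=7 * 24 * 60
    )
    return [event["Message"] for event in resp["Events"]]
```

With credentials configured, `maintenance_events(fetch_messages("my-cluster"))` would list upcoming OS patches and version upgrades for that cluster.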

You're probably correct about being able to get Redshift to downgrade a cluster to a version that has the bug.

For information on using UNLOAD and the required IAM permissions, see UNLOAD. Amazon Redshift represents SUPER columns in Parquet as the JSON data type, which enables semistructured data to be represented in Parquet. You can query these columns using Redshift Spectrum or ingest them back into Amazon Redshift using the COPY command.
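A round trip through Parquet, like the COPY/UNLOAD workaround discussed earlier, might look like the following sketch. It builds the SQL text only; the table and column names, the bucket, the IAM role ARN, and the `PARALLEL OFF` / `MAXFILESIZE` choices are hypothetical, and the `CAST(... AS DOUBLE PRECISION)` simply mirrors the cast mentioned in the thread.

```python
"""Sketch: build UNLOAD-to-Parquet and COPY-from-Parquet statements for a round trip."""
from typing import Optional


def unload_parquet(query: str, s3_prefix: str, iam_role: str,
                   parallel: bool = True,
                   max_file_size_mb: Optional[int] = None) -> str:
    options = ["FORMAT AS PARQUET"]
    if not parallel:
        # Write serially instead of one or more files per node slice.
        options.append("PARALLEL OFF")
    if max_file_size_mb is not None:
        options.append(f"MAXFILESIZE {max_file_size_mb} MB")
    escaped = query.replace("'", "''")  # the query is passed as a quoted literal
    return (
        f"UNLOAD ('{escaped}')\n"
        f"TO '{s3_prefix}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        + "\n".join(options)
        + ";"
    )


def copy_parquet(table: str, s3_prefix: str, iam_role: str) -> str:
    # COPY loads every object under the prefix; Parquet columns map to table columns by position.
    return (
        f"COPY {table}\n"
        f"FROM '{s3_prefix}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        "FORMAT AS PARQUET;"
    )


if __name__ == "__main__":
    role = "arn:aws:iam::123456789012:role/MyRedshiftRole"  # placeholder
    print(unload_parquet(
        "SELECT id, CAST(amount AS DOUBLE PRECISION) AS amount FROM sales",
        "s3://my-bucket/sales/", role, parallel=False, max_file_size_mb=256))
    print(copy_parquet("sales_reloaded", "s3://my-bucket/sales/", role))
```

Parquet output can't be combined with text-only UNLOAD options such as HEADER or DELIMITER, which is why this sketch omits them. The COPY target table would need a DOUBLE PRECISION column wherever the cast was applied.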
